The Challenge of Arbitrary and Changing Spatial Data

Predictable Data – A Well Understood Problem

When it comes to moving data we all like predictability. Our technology grew up solving problems in which the schema and data format were predictable. If you give a member from the League of Spatial Superheroes a data challenge in which both the data structure/schema and the data format are predictable, static, and known – then they can tackle the problem.

Unfortunately, the world is not always this clean and good, and there are two data villains at work seeking to wreak havoc in your workplace with change and unpredictability.

Change is Constant in the World of Data

The first data villains are the collective known as the ShapeShifters. The ShapeShifters are hard to identify but their goal in life is to wait until a workflow functions well and then almost immediately introduce change to database schemas and other systems. And sometimes they don’t even wait for things to start working before they institute change.

We have seen the ShapeShifters at work at both companies and governments. Indeed, if there is one thing that is constant, it is the evolution of corporate IT systems to better serve organizations. These changes impact the schema of the data, as the data is there to support the applications upon which the organizations depend.

Is it possible to make our data-moving scripts more resilient to change? Is it possible to create scripts that are impervious to any and all changes? The answers are yes, and sometimes.

Preparing for the Unpredictable

The second data villain is the ever-present Doctor Arbitrary. The good doctor’s trick is to go out of his way to specify as little as possible and then expect systems and members of the League of Spatial Superheroes to just “figure it out and make it work!” Enterprise and industry standard data models are something that he’s steadfastly against. He hides behind the argument that standards get in the way of innovation and flexibility, but that’s just his cover. Don’t be fooled!

Like the ShapeShifters, Doctor Arbitrary is alive and well everywhere you look!

We have seen him at work at utility companies where there’s variance among CAD drawings. These variances could be caused by a number of things: from evolving CAD usage to things like CAD user preferences in which each operator does things slightly differently. It could also be because the organization was simply giving hard copies to their employees and the details of the file structure weren’t deemed to be relevant to the efficiency of that workflow.

Other organizations have used file-based GIS tools in which the data model was merely a guideline. This happened because there was no corporate database or application that enforced or depended on a consistent data model.

Taken to the extreme we have seen cases where organizations have “associated” spatial data files in a variety of formats and schemas from multiple sources. A common example is a local government that in the past was happy just to get any spatial information from their contractors.

Other times a user is tasked to simply create a single process in which every dataset in format X is duplicated in format Y while preserving all the spatial and attribute data in the process.

Taking Control of Change and Unpredictability through the Power of Run-Time Data Interpretation

Fortunately, we have been at work developing new tools for the League of Spatial Superheroes to better equip them to render these data villains powerless.

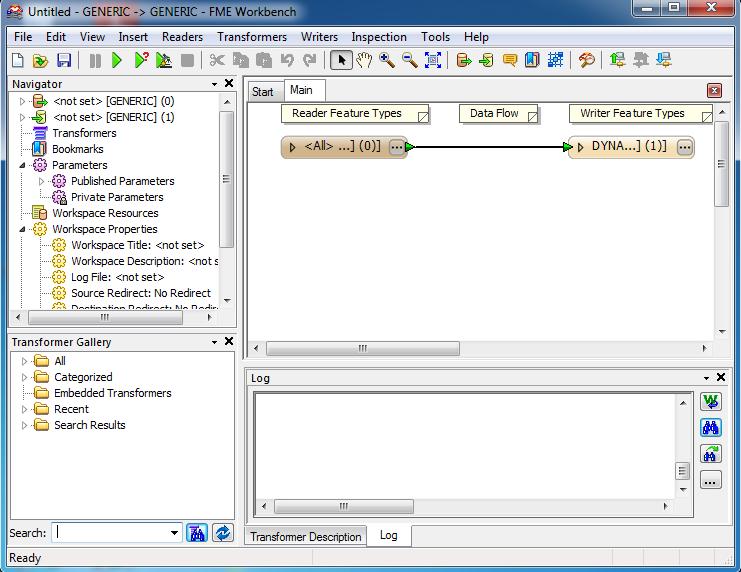

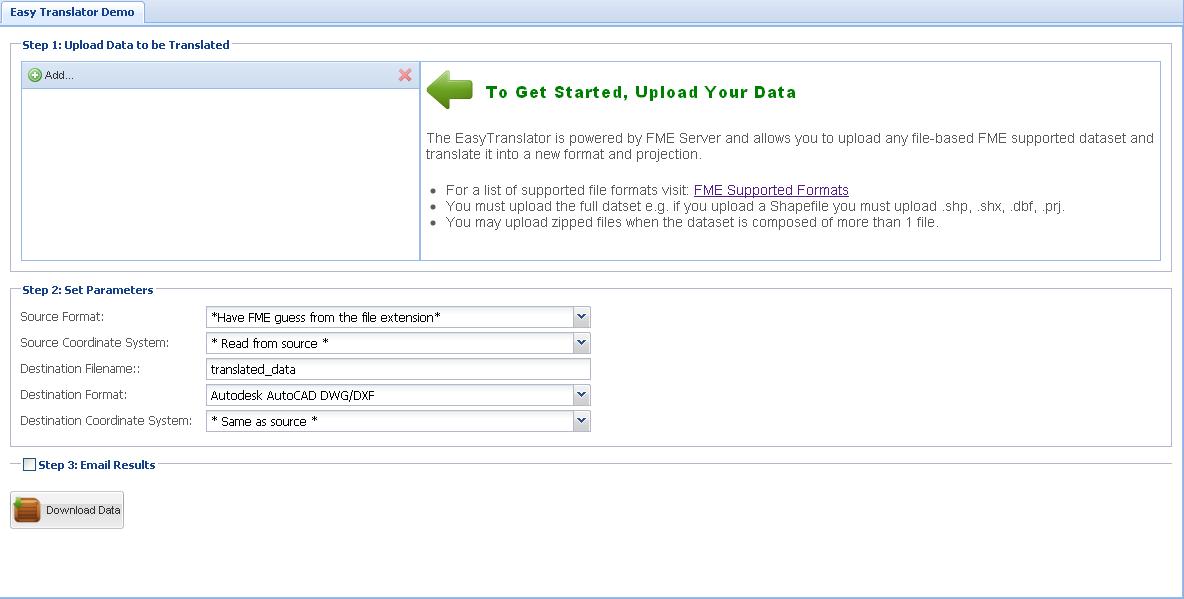

As of the 2010 version of FME, there are two technologies, called Dynamic (schema detection) and Generic (format detection), which battle these data villains. What can be done in the extreme case where nothing is predictable is best demonstrated by the free EasyTranslator (safe.local/EasyTranslator) web service. This web service runs a trivial FME script that detects the format (generic technology) and the schema (dynamic technology) at run-time, then moves all the data to the destination data format of your choice (more info).
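To make the idea of run-time interpretation concrete, here is a minimal Python sketch. It is not FME and does not use any FME API; the function names (`detect_format`, `read_features`, `write_csv`) are hypothetical, it only knows two text formats (CSV and GeoJSON), and it carries attributes only, not geometry. The point is simply that neither the format nor the attribute names are written into the script ahead of time.

```python
import csv
import io
import json


def detect_format(text):
    """Guess the format from the content itself (illustrative: GeoJSON or CSV only)."""
    stripped = text.lstrip()
    if stripped.startswith("{"):
        try:
            doc = json.loads(stripped)
        except json.JSONDecodeError:
            doc = None
        if isinstance(doc, dict) and doc.get("type") == "FeatureCollection":
            return "geojson"
    return "csv"


def read_features(text):
    """Return (schema, features); the schema is discovered from the data at run time."""
    if detect_format(text) == "geojson":
        features = [dict(f.get("properties") or {}) for f in json.loads(text)["features"]]
    else:
        features = [dict(row) for row in csv.DictReader(io.StringIO(text))]
    # Build the schema as the ordered union of attribute names actually seen.
    schema = []
    for feature in features:
        for name in feature:
            if name not in schema:
                schema.append(name)
    return schema, features


def write_csv(schema, features):
    """Write every discovered attribute to the destination format (CSV here)."""
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=schema, lineterminator="\n")
    writer.writeheader()
    writer.writerows(features)  # attributes a feature lacks are left empty
    return out.getvalue()
```

If someone adds or renames a column in the source tomorrow, this sketch needs no change, which is the property that makes the ShapeShifters so much less scary.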

How useful is this trivial script? Surprisingly, quite useful actually! Pair it with a database of data and FME Server and you have a data distribution system that is impervious to the ShapeShifters and any database schema changes that they make.

How Much Predictability Do You Need?

As interesting as these two extremes are, it gets better: you don’t have to choose between “static” and “dynamic”. The real question is how much “dynamic” you can use, or rather how much “static” you need. The key breakthrough occurs when one realizes that translation scripts can be created anywhere on the continuum between the predictable and the unpredictable.

With these technologies you can create scripts that are much more resilient by only depending on the attributes and geometry aspects that are absolutely necessary to get the job done. What about all the other information that is not necessary to get the job done? Keep it and move it to the destination or not. It is again up to you!
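A small Python sketch of that middle ground, again purely illustrative and not FME code: the hypothetical `translate` function insists only on the attributes the job really needs (here an assumed `id`) and lets you decide whether everything else rides along.

```python
def translate(feature, required=("id",), keep_extras=True):
    """Depend only on `required`; optionally carry all other attributes across."""
    missing = [name for name in required if name not in feature]
    if missing:
        raise ValueError(f"missing required attributes: {missing}")
    # The static part: the attributes this script actually relies on.
    result = {name: feature[name] for name in required}
    # The dynamic part: whatever else happens to be there, kept or dropped.
    if keep_extras:
        for name, value in feature.items():
            result.setdefault(name, value)
    return result
```

The fewer names you put in `required`, the further toward the dynamic end of the continuum the script sits.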

Let’s look back at the utility company with a variety of CAD variants and the company with different GIS files. Now they are able to build scripts that handle all of the varieties by focusing on the things that are common. The differences can be carried across either untouched by our dynamic technology, or dealt with through a combination of dynamic technology and the SchemaMapper.
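One way to picture the "deal with the differences" option is a lookup table that maps each variant name onto a common one. The Python sketch below is only an analogy and makes no claim about how the SchemaMapper actually works; the attribute names and the `map_schema` function are made up for illustration.

```python
# Hypothetical lookup table: variant attribute names on the left, common name on the right.
MAPPING = {
    "PIPE_DIA": "diameter",
    "Diameter_mm": "diameter",
    "dia": "diameter",
}


def map_schema(feature, mapping):
    """Rename known variants; carry any unmapped attribute across untouched."""
    return {mapping.get(name, name): value for name, value in feature.items()}
```

Supporting one more operator's habits then means adding a row to the table rather than editing the script.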

Do you want to learn more about the power of Dynamic and Generic and how they can help you work against data villains like the ShapeShifters and Doctor Arbitrary? If so, check out this page on fmepedia. Please do let me know how the battle against these evil data villains is going for you, and what tools you need to be successful.